Frontier Models Taskforce moves fast. Chicken welfare policy moves slow

Doing Westminster Better in September 2023

This newsletter is my ongoing attempt to explain effective altruism to Westminster, and Westminster to EA.

Since my last post, Rishi Sunak has attended the G20, we’ve had several important science policy updates, and POLITICO have introduced readers to something called effective altruism, an unusual movement influencing AI policy.

I think many readers are here for the AI news, but in my opinion the best section in this newsletter is Animal policy 3, a deep dive into how the British news media is reckoning with the awful conditions of farmed chickens. I hope you learn something.

Plenty to discuss! Let’s begin.

AI policy 1. ‘AI knows no borders’: After spy scandal, Sunak still committed to inviting China to AI Safety Summit

Two men were arrested in March on suspicion of breaking the Official Secrets Act by spying for China. One of them was a parliamentary researcher who had contact with senior Tory MPs. The news was broken by the Times on 10 September – immediately leading to questions about why MPs didn’t know earlier, and heightened criticism of Sunak’s China strategy.

Sunak raised the issue with Premier Li Qiang, representing China at the G20. He’s still committed to inviting China to his AI Safety Summit, with a Downing Street representative saying: “AI knows no borders”. An invitation for China, increasingly controversial among Sunak’s international allies, backbenchers and Cabinet, is widely supported by AI safety experts. Encouraging race-like dynamics with China seems dangerous, and Chinese involvement in international coordination on AI would be welcome.

Here’s Ian Hogarth, chair of the Frontier Models Taskforce, making the case clearly (in the FT; paywalled):

“These are fundamentally global risks. And in the same way we collaborate with China in aspects of biosecurity and cyber security, I think there is a real value in international collaboration around the larger scale risks,” he said.

“It’s just like pandemics. It’s the sort of thing where you can’t go it alone in terms of trying to contain these threats.”

The China controversy is largely focused on whether China should be officially designated a “threat”, something Sunak has resisted. Per The Times, Sunak’s Cabinet is openly split on this issue (Business and Trade Secretary Kemi Badenoch and Security Minister Tom Tugendhat are among those supporting threat designation). To understand how British politicians perceive China after the spy scandal, see articles like:

How ‘Commons China spy’ made feathers fly between the Tory doves and hawks (The Telegraph; paywalled)

Arrest of alleged spy raises questions around UK’s China policy (Financial Times; paywalled)

Shock and recriminations as Chinese spy scandal rocks UK parliament (POLITICO)

China’s RSVPed. Europe has FOMO. The big story is China, but we also have news about some EU states feeling snubbed over the AI Safety Summit. Only six of 27 EU members – “France, Germany, Ireland, Italy, the Netherlands and Spain” – look set to be invited. “The European Commission is also expected to be represented” and, from beyond Europe, “the US, Canada, South Africa, Brazil and India”.

One diplomat from an EU country that was invited says: “It’s very important to keep a regional balance if you want to have an impact beyond Europe”.

Not invited: Austria, Belgium, Bulgaria, Croatia, Republic of Cyprus, Czech Republic, Denmark, Estonia, Finland, Greece, Hungary, Latvia, Lithuania, Luxembourg, Malta, Poland, Portugal, Romania, Slovakia, Slovenia, and Sweden.

This story comes from sifted.eu; I’m not familiar with this outlet, but they were cited by POLITICO so I’ll trust them for now.

On AI summits, “no one is really sure what they are about – or who’s in charge”. So says POLITICO, taxonomising the various fora sketching out international AI governance agendas right now (the G7, the EU, the Global Partnership on Artificial Intelligence, …). Here are their comments on the AI Safety Summit:

Last, and certainly least, is the United Kingdom. London is trying to gatecrash the global AI parade with its own meeting — slated for November 1-2 and focused on “AI safety” — that has taken almost everyone by surprise. Prime Minister Rishi Sunak is eager to promote the U.K. as a global leader in AI. But has shifted the event’s priority to so-called frontier AI, marketing jargon for the most advanced AI systems. So far, no one — including those within the British government — has a clue to what will come from this event.

But at least one organisation has thoughts on what the AI Safety Summit should be about. The Ada Lovelace Institute have set out their wishlist in an article called ‘Seizing the ‘AI moment’: making a success of the AI Safety Summit’.

AI policy 2. Frontier Models Taskforce shares progress update

(The main points in this section all come from the Taskforce’s progress update.)

Ian Hogarth’s Frontier Models Taskforce has established an expert advisory board:

Yoshua Bengio

Paul Christiano

Matt Collins: Deputy National Security Adviser for Intelligence, Defence and Security

Anne Keast-Butler: director of GCHQ

Alex van Someren: Chief Scientific Adviser for National Security

Helen Stokes-Lampard: Chair of the Academy of Medical Royal Colleges

Matt Clifford: Prime Minister’s joint Representative for the AI Safety Summit, Chair of ARIA and co-founder of Entrepreneur First.

If you’re an EA reading this newsletter, Bengio and Christiano likely need no introduction.

Bengio won the 2018 Turing Award with Geoffrey Hinton and Yann LeCun for their work pioneering deep learning. Any given profile will tell you that the Award is computing’s Nobel Prize and that the three men are the ‘godfathers of AI’. He’s scientific director at the Montreal Institute for Learning Algorithms, which I understand to be one of the world’s largest AI research centres.

Christiano used to work on alignment at OpenAI, where I understand he was instrumental in developing reinforcement learning from human feedback (RLHF). He’s since left OpenAI to run the Alignment Research Center; as well as pursuing an ambitious alignment research agenda, ARC red-teamed GPT-4 for OpenAI.

Both men are concerned about the development of extremely dangerous AIs. Bengio’s thoughts are outlined in ‘How rogue AIs may arise’, and Christiano’s in ‘My views on “doom”’.

Two quick thoughts. First: the UK is treating AI as a national security matter. Collins, Keast-Butler and van Someren are the national security apparatus. Consider also a Tweet from Hogarth: “Someone recently described 'open sourcing' of AI model weights to me as 'irreversible proliferation' and it's stuck with me as an important framing. Proliferation of capabilities can be very positive - democratises access etc - but also - significantly harder to reverse”. The UK seems more likely to securitise frontier AI models, and less likely to endorse open-sourcing them.

AI safety advocates tend to believe that open-sourcing the weights of frontier models would be too dangerous: you don’t want everybody having this power. (You might be interested in Yann LeCun’s Senate testimony, which argues against this claim.)

Second: the inclusion of Stokes-Lampard – a practising GP who can see first-hand “how conversational AI tools can impact day to day medical diagnoses” – shows concern for AI ethics, especially in healthcare. The Taskforce tell us this explicitly: “Beyond national security and AI research expertise we are also excited to build an advisory board that can speak to critical uses of frontier AI on the frontlines of society”. And the FT, who have an interview with Hogarth focused on the healthcare angle, write that: “weaponising the technology to hobble the National Health Service or to perpetrate a “biological attack” were among the biggest AI risks his team was looking to tackle”.

And they’ve recruited Yarin Gal and David Krueger to serve as Research Directors. Gal joins from Oxford and Krueger from Cambridge; Krueger “will be working with the Taskforce as it scopes its research programme in the run up to the summit” so may leave the group after helping to set a research agenda.

They’ll be leading a research team that’s already expanded from one technical expert with three years’ frontier AI experience to a group with over fifty years’ collective experience. The team is looking to expand by another order of magnitude. Sign up!

One thing I enjoy in Hogarth’s communications is his emphasis on public service. He praises Nitarshan Rajkumar (the Department for Science, Innovation and Technology’s first frontier AI researcher) as “a testament to what an earnest, incredibly hardworking technical expert can accomplish when they commit to public service”. Shout-out to Nitarshan! And in conversation with Science Secretary Michelle Donelan, he says:

“These brilliant researchers [are] realising how important government is at this moment. [...] I think the AI research community is kind of realising that it will not solve these problems if government is not at the table, and is not at the table in a resourced, technically expert way. And I think they see this opportunity as very important and actually frankly critical to safety on a global basis.”

They’re partnering with AI safety and security organisations:

ARC Evals

Trail of Bits

The Collective Intelligence Project

The Center for AI Safety.

The Collective Intelligence Project stands out; they’ve been thinking about new governance mechanisms for the world of AI, like “alignment assemblies”. The UK recently experimented with a citizens’ assembly on climate change. Could something like this be a model for a democratic process to find the values AIs should be aligned with? Pilot alignment assemblies looked at what questions the public had about the impacts of AI and how AIs should behave when values conflict.

Operations news: Ollie Ilott appointed Director for the Taskforce. Previously, Ilott led the Prime Minister’s domestic private office as Deputy Principal Private Secretary. (I think this means his speciality was ~chief of staff?) Hogarth, who is “grateful to the Prime Minister for releasing such a key member of the team at No.10”, wants us to see Ilott’s appointment as a costly signal that Sunak is taking AI safety seriously and responding professionally.

Hogarth also writes that Ilott “embodies” a “‘General Groves’ energy”. Groves presided over the construction of the Pentagon and the Manhattan Project. (He’s Matt Damon in Oppenheimer). If you wanted a Manhattan Project for AI safety, your wish might have just come true.

In any case, the Taskforce definitely sees itself as working fast and flexibly. Donelan says the government must recruit researchers who were in industry “yesterday”, and Hogarth says the Taskforce is “a start-up that will move fast, but [play] by the same rules as the rest of government”. Both have compared it to the Covid Vaccine Taskforce.

The Taskforce motto could be ‘move fast; don’t break anything’. Why so speedy? Listen to Donelan in her speech to CogX tech festival:

Just a few months ago, you will have seen that an AI model was used to discover a new drug for liver cancer in the space of just 30 days.

Not long after, companies unveiled AI models that could predict the weather with the same degree of accuracy as the whole of our existing weather monitoring systems – and they did it 10,000 times faster.

The speed of progress is like nothing we have ever seen before.

In the space of a single human lifetime, humanity progressed from the horse and cart to launching a man into space.

We are seeing a comparable transformation in the field of AI, occurring in just under a decade. Five years ago, the most advanced AI could barely write coherent sentences. Today, they can instantly generate stunning art, they can ace the bar exam and use tools as we ourselves do.

Time is of the essence. Interestingly, Ilott – back when he was a researcher at the Institute for Government – once wrote a short piece calling for a small “War Cabinet” to make quick decisions on Brexit. (This was during Theresa May’s premiership, when everybody was worried about the clock that would start ticking once Article 50 was triggered.) This may give some insight into how he thinks decision-making should work for a fast-evolving problem like AI.

The Ilott announcement was the biggest of a few comments on operational matters. Also:

Hogarth stresses that the Taskforce is establishing foundations for technical work. He reminds us that OpenAI, DeepMind and Anthropic have already committed to giving access to their models.

The Taskforce is working closely with No10 Data Science. I’m not super familiar with “10DS” but you might be interested in this quick commentary from the Institute for Government.

AI policy 3. Bristol to receive AI supercomputer

The University of Bristol will host a new AI supercomputer, backed by a £900mn government investment. Simon McIntosh-Smith, Professor of High Performance Computing, will be the project lead. The AI Research Resource (AIRR) will be called Isambard-AI, after Isambard Kingdom Brunel. (Two more excellent supercomputer names waiting in the wings).

We don’t have technical details on the supercomputer, but it seems a safe bet that this is where the recently reported purchase of NVIDIA chips is going.

The government announcement comes with typical glowing quotes from Donelan, McIntosh-Smith, and Phil Taylor, Bristol’s Pro Vice-Chancellor for Research and Enterprise. I’d like to highlight this one from Taylor:

“AI is expected to be as important as the steam age, with ramifications across almost every area of academia and industry. Bristol’s proud to be at the forefront of this revolution.”

Sounds pretty important! Here’s the press release.

AI policy 4. Tony Blair “not anticipating the extinction of humanity”

Famous last words? In an interview with The Metro, former Labour Prime Minister Tony Blair has said we need to remain sensitive to extreme risks from AI (he cites what happens “when people put to the inventors of something like this ‘what’s the worst that could happen?’”), but that he isn’t expecting “science-fiction” outcomes like human extinction.

Blair’s influence over the current Labour Party leadership is huge. Starmer is trying to invoke Blair’s 1997 strategy and image, and – as the Economist reported recently – his think tank is extremely well-funded. Therefore, Blair’s messaging might be alarming for AI safety advocates; Rishi Sunak has so far been clearer than Keir Starmer about the existential threat.

In related news, the Labour Party has created a new post of Shadow Minister for AI and Intellectual Property. Reporting to the Shadow Science, Innovation and Technology Secretary, Matt Rodda MP will be the party’s AI expert. Rodda represents Reading (currently: Reading East; after boundary review: Reading Central).

AI policy 5. Conjecture speaks to House of Lords

In ‘crossovers you weren’t expecting’: Connor Leahy, CEO of AI lab Conjecture, has addressed the House of Lords on existential risks from AI. (See his Tweet here). Leahy said the Lords were engaged and recognised parallels to nuclear weapons. He gave three recommendations:

Liability for developers and users. What liability should look like in terms of AI has been an area of focus for policymakers internationally (e.g. featured in Senators Blumenthal and Hawley’s AI legislation blueprint). Many lawmakers seem worried about repeating the mistakes of social media.

A cap on computing power. AI safety experts have been advocating for compute governance, which could build on protectionist semiconductor trade policy and/or be modelled on the international control of nuclear material.

A global AI ‘kill switch’. This is out of scope for the House of Lords and is Leahy’s most ambitious ask. He wants “governments [to] build the infrastructure to be able to shut down powerful AI systems”. On the one hand, this is a common-sense approach to dangerous AI (‘why not pull out the plug?’ is many people’s first response to learning about AI risks), but on the other, this will require an immense degree of global coordination.

Leahy also writes, “I think there should be a complete moratorium on development of AIs using unprecedented levels of computing power.” The EA Forum has been publicly debating the value and optics of an AI pause this week.

The House of Lords is an unelected second chamber. Lords can introduce bills, but it would be unusual for a bill on a high-salience issue like AI not to start in the Commons. The Lords would then review and amend the bill. The government consists of MPs (from the Commons) and Lords, and so the Lords will have input into the government’s legislative approach; importantly, Jonathan Berry, the Minister for AI and Intellectual Property, is a Lord. His title: 5th Viscount Camrose. British politics is camp sometimes!

Conjecture’s head of governance and strategy, Andrea Miotti, has written for TIME, elaborating on the org’s views on AI safety. He calls for an international organisation to monitor and control frontier AI development, arguing that AGI is too dangerous to be private. Here are some relevant paragraphs:

World leaders are calling for the establishment of an international institution to deal with the threat of AGI: a ‘CERN’ or ‘IAEA for AI’. In June, President Biden and U.K. Prime Minister Sunak discussed such an organization. The U.N. Secretary-General, Antonio Guterres thinks we need one, too. Given this growing consensus for international cooperation to respond to the risks from AI, we need to lay out concretely how such an institution might be built.

[…]

MAGIC (the Multilateral AGI Consortium) would be the world’s only advanced and secure AI facility focused on safety-first research and development of advanced AI. Like CERN, MAGIC will allow humanity to take AGI development out of the hands of private firms and lay it into the hands of an international organization mandated towards safe AI development.

MAGIC would have exclusivity when it comes to the high-risk research and development of advanced AI. It would be illegal for other entities to independently pursue AGI development. This would not affect the vast majority of AI research and development, and only focus on frontier, AGI-relevant research, similar to how we already deal with dangerous R&D with other technologies. Research on engineering lethal pathogens is outright banned or confined to very high biosafety level labs. At the same time, the vast majority of drug research is instead supervised by regulatory agencies like the FDA.

MAGIC will only be concerned with preventing the high-risk development of frontier AI systems - godlike AIs. Research breakthroughs done at MAGIC will only be shared with the outside world once proven demonstrably safe.

AI policy 6. EA writes about POLITICO writing about EA

Here’s a must-read if you care about AI policy: POLITICO Europe has introduced its readers to effective altruism, the movement “shaping Rishi Sunak’s AI plans”. POLITICO Europe have written about EA and AI policy before, but this piece was framed as an introduction and, now that AI policy articles are must-reads, could be how much of Westminster meets EA for the first time.

If you’re an EA, you probably have thoughts about whether the reporting was fair and about how the EA movement should think about our perception in the media. I’ll leave that discussion to the EA Forum.

First meeting of Biosecurity Council

Industry and academic experts have convened to advise the Science Minister on responsible bioengineering. Experts joined from universities (e.g. KCL and Cambridge), companies (e.g. GSK, DeepMind, AstraZeneca), the UK Bioindustry Association, and the Centre for Long-Term Resilience. The government messaging was balanced between optimism and caution: we want to take advantage of the bioengineering revolution, “while safeguarding against potential misuse”.

The government press release said bioengineering could “change the way we grow food, create medical treatments and produce the sustainable fuel we need to run our cars, homes and offices.” EAs will be keen to reap the rewards – food system transformation, especially to benefit animals; improved health interventions; and climate change mitigation are all key moral priorities – but especially keen to avoid downside risks. Bioengineered pathogens could pose an existential threat, and recent developments in AI have heightened the risk.

We’re so back: UK rejoins Horizon Europe, announces ARIA directors

Let’s stick with science policy news for one more section.

The UK has rejoined the Horizon Europe scheme, in a move welcomed by scientists. This is the EU’s science collaboration initiative, which is running from 2021 to 2027. The budget is €95.5 billion. British participation was disrupted by Brexit, and rejoining the programme was a top priority for the UK’s scientific community.

The initiative’s research is grouped in several clusters. Possibly of interest to EAs are:

Cluster 1: Health, including health interventions and “pandemic preparedness”

Cluster 4: Digital, Industry and Space, including “enabling emerging technologies” and “artificial intelligence and robotics”

Cluster 5: Climate, Energy & Mobility, including evidence-based approaches to climate change (maybe even some geoengineering stuff?)

Cluster 6: Food, Bioeconomy, Natural Resources, Agriculture and Environment, including “Impact of the development of novel foods based on alternative sources of proteins”.

The new ‘high-risk, high-reward’ science research body, ARIA, has named its research directors. The Advanced Research and Innovation Agency, established in January of this year, is modelled after the USA’s DARPA and seeks to fund scientific moonshots. Budget: £800mn. Consider it a hits-based grantmaker, if that’s your thing. This is seen as a first look at ARIA’s research agenda. The directors will be:

Angie Burnett, who has been researching “the application of technology to the food security system”

Jacques Carolan, who has been researching “neurotechnologies as therapeutic tools”

Sarah Bohndiek and Gemma Bale (co-programme directors), who have been “looking at the use of optical technologies in issues including human health and climate change”

Suraj Bramhavar, who has been working to “create alternative hardware paradigms that can allow us to sustainably scale AI compute to benefit everyone in society”

David ‘davidad’ Dalrymple, who has been researching “the mathematical approaches to guarantee safe and reliable AI”

Jenny Read, who has been researching “robotics with a focus on interdisciplinarity”

Mark Symes, who has been “exploring and evaluating technologies to reduce atmospheric carbon dioxide levels”.

There’s a lot to be excited about here! Multiple pathways promise new technologies to combat climate change. Bramhavar, Dalrymple and maybe Read are working on, or adjacent to, AI alignment. And Burnett’s research into technology and food security could have implications for the alternative proteins world.

My glosses quote a report from James Coe at Wonkhe. But I encourage you to read the ARIA press release, which details the specific, fascinating questions each director is investigating. ARIA suggest each programme will progress through four stages: (i) questioning the status quo; (ii) bounding an opportunity space worth exploring; (iii) formulating a core hypothesis to underpin a research programme; and (iv) launching a programme for solicitation. Everybody is questioning for now, except Bramhavar, who is bounding.

Coe picks out a few interesting things about ARIA:

The research pathways are dealing with high-stakes, high-scale problems like climate change, AI and food security

The directors are coming from a mix of professional backgrounds, including “more conventional academic careers with big interests, academics that have run or built companies, and programme directors that lean more into business and technology worlds but with significant academic credentials”

ARIA’s social media presence is a Substack. (Hey, me too!) This suggests some of that nimble, start-up energy that the Frontier Models Taskforce wants to embody

Nature also had an insightful commentary. Cathleen O’Grady writes that:

The heavy focus on neuroscience is “completely coincidental”, per CEO Ilan Gur

Those who wanted ARIA to pick one area of focus will be disappointed. The Labour Party tabled an amendment to the bill establishing ARIA, trying to narrow its focus to net zero

Funding may wind down, with the initial £800mn covering just four years. I don’t think either Labour or the Tories have made a commitment to renewing funding.

Animal policy 1. Labour set out farming policy

Steve Reed MP, the Shadow Defra Sec, has set out the party’s agricultural policy. Reed, who recently assumed the role in Keir Starmer’s reshuffle, was interviewed by Chris Brayford for Farmers Guardian as Labour MPs celebrated the NFU’s “Back British Farming Day”. Labour is promising to “make it easier for British food producers to export to the EU by seeking to negotiate a veterinary agreement”, and to “support domestic food production” including by reforming public procurement to “make sure half of all food bought by the public sector is food that is locally or sustainably produced”.

The article empathises with farmers as victims of inflation, the climate crisis, and “an increasingly globalised food supply chain”. And it frames farmers as British heroes: “A thriving farming sector is [...] critical to safeguarding our nation's heritage, security, and economic growth.”

And I note how closely the Labour Party’s vision aligns with that of the British Poultry Council, whose chief executive, Richard Griffiths, was writing about “costly and disproportionate trade barriers” hurting trade with the EU, and about how “domestic production – promoting a secure supply of food grown right at home – is what will move us through this period of economic and political uncertainty”.

The Labour Party should consider the animal welfare implications of its proposals. Take reduced friction in animal products trade with the EU. With the EU dropping its animal welfare commitments like cage-free chicken, will that make it easier to accept low-welfare farming in the UK? Or with public procurement: can the party insist that meat bought by the public sector is high-welfare, or even that public-sector meat purchasing stays in line with public health recommendations?

Animal policy 2. Tories rule out meat tax which they had never supported

Rishi Sunak has a “new approach” to his party’s commitment to net zero emissions by 2050. The move, which has been widely criticised by people concerned about the environment (including within his own party), includes dropping “heavy-handed measures” like “the proposal to make you change your diet – and harm British farmers – by taxing meat”.

A meat tax had never been on the agenda, and had been preemptively ruled out by multiple previous Conservative PMs.

Sunak’s climate moves suggest that he has taken a lesson from the Uxbridge by-election and anti-ULEZ sentiment: that the British public are against climate policy that impinges on daily life. This is supported by some polling. For politicos outside the animal bubble, this is seen as Sunak moving his party to general election footing and hardening in a Trussian direction.

But for animal advocates, this is worrying. Ruling out a policy that nobody had proposed might be a sign that animal policy risks getting dragged into the culture wars, and that Sunak’s Conservatives are hardening against animal-friendly measures.

Animal policy 3. The news reckons with the horrors and economics of factory farming chicken

Several outlets have written about the awful conditions British chickens live and die in.

The BBC write ‘Frankenchicken, farming and the cost of living crisis’. “We think it's the right time to tell the story of how the UK became home to millions of ‘Frankenchickens’.”

Frankenchickens are “genetically selected, fast-growing breeds”. Whereas a “standard organic chicken grows to its weight for slaughter in 81 days”, a Frankenchicken takes just 35 days. Weight for slaughter is such a sickly idea to me: a chicken’s natural lifespan is years. Of course, a Frankenchicken lives with endless ailments and maladies, like lameness and a heightened risk of heart failure, so its “natural”, unslaughtered lifespan would still be short. And its short life would be miserable, especially because it would almost certainly be raised in factory-farm (“intensive indoor rearing”) conditions, barely seeing the sun, and crammed in with other chickens so densely that it causes psychological distress and heightens pandemic risk. Frankenchickens are the chickens we fucked up. They have, as The Humane League’s Sean Gifford says, “suffering in their DNA”.

Millions of Frankenchickens are now slaughtered each year in the UK. The BBC cite Defra to tell us that “England produces 90 million chickens a month”, and they cite the Eating Better Alliance to tell us that “850 million chickens are reared for meat in the UK each year” – 95% of them suffering through intensive indoor rearing.
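The two statistics above come from different sources and cover different scopes (England per month versus the whole UK per year), so they can't be compared directly. As a rough sanity check, here is the back-of-envelope arithmetic, assuming England's monthly figure holds steady across a year; the variable names are purely illustrative.

```python
# Back-of-envelope arithmetic on the chicken figures cited by the BBC.
# Assumption (not from the source): England's monthly output is constant across the year.
ENGLAND_CHICKENS_PER_MONTH = 90_000_000   # Defra figure, via the BBC
UK_CHICKENS_PER_YEAR = 850_000_000        # Eating Better Alliance figure, via the BBC
INTENSIVE_SHARE_PERCENT = 95              # share reared intensively indoors

# Annualise England's monthly figure.
england_per_year = ENGLAND_CHICKENS_PER_MONTH * 12

# UK birds reared intensively; integer arithmetic avoids float rounding.
uk_intensive_per_year = UK_CHICKENS_PER_YEAR * INTENSIVE_SHARE_PERCENT // 100

print(f"England, annualised: {england_per_year:,}")
print(f"UK, reared intensively: {uk_intensive_per_year:,}")
```

Annualised, England's figure (about 1.08 billion) exceeds the UK-wide 850 million, so the two sources are not measuring quite the same thing. Either way, the intensively reared share alone comes to roughly 800 million birds a year.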

Animal welfare advocates have been fighting to end Frankenchicken breeding on a few fronts:

Corporate campaigns to get businesses to sign onto the Better Chicken Commitment. The BCC is a science-based, six-point checklist for chicken welfare. One of the criteria is to use “breeds that demonstrate higher welfare outcomes” – definitely not Frankenchickens

Legal advocacy to win a ruling that breeding Frankenchickens falls foul of existing animal welfare law. The Humane League sued Defra, arguing that Frankenchickens breach a legal requirement that animals can only be farmed if “they can be kept without any detrimental effect on their health or welfare”. The lawsuit failed, but the Humane League are appealing

Undercover investigations to show the public what’s happening — like the ones that motivate this BBC piece!

Why are chicken farmers doing this? The BBC acknowledge that “demand dictates what our supply chain looks like”: “the price of a chicken is roughly the same as a takeaway coffee, making the meat a popular staple for many households”. Suffering is more profitable, and that’s tragic. The farmer profiled by the BBC, who himself used the advocate-coined term “Frankenchicken”, indicated willingness to move to a higher-welfare system if the economics made sense.

The FT delved into the details for their report, ‘How British chicken got caught in the country’s economic storm’ (paywalled). It wasn’t always like this. In 1950, chicken was a once-a-week luxury, and the bird that was killed for your dinner probably lived outside, genetically unmangled, for 12 weeks. Farmers exploited economic efficiencies – indoor intensive rearing, selective genetic breeding, killing them younger – to keep chicken cheap as per capita meat consumption skyrocketed. Now, poultry meat constitutes a bigger proportion of meat consumed, and millions of factory farmed Frankenchickens have borne the brunt of the UK’s changing diet.

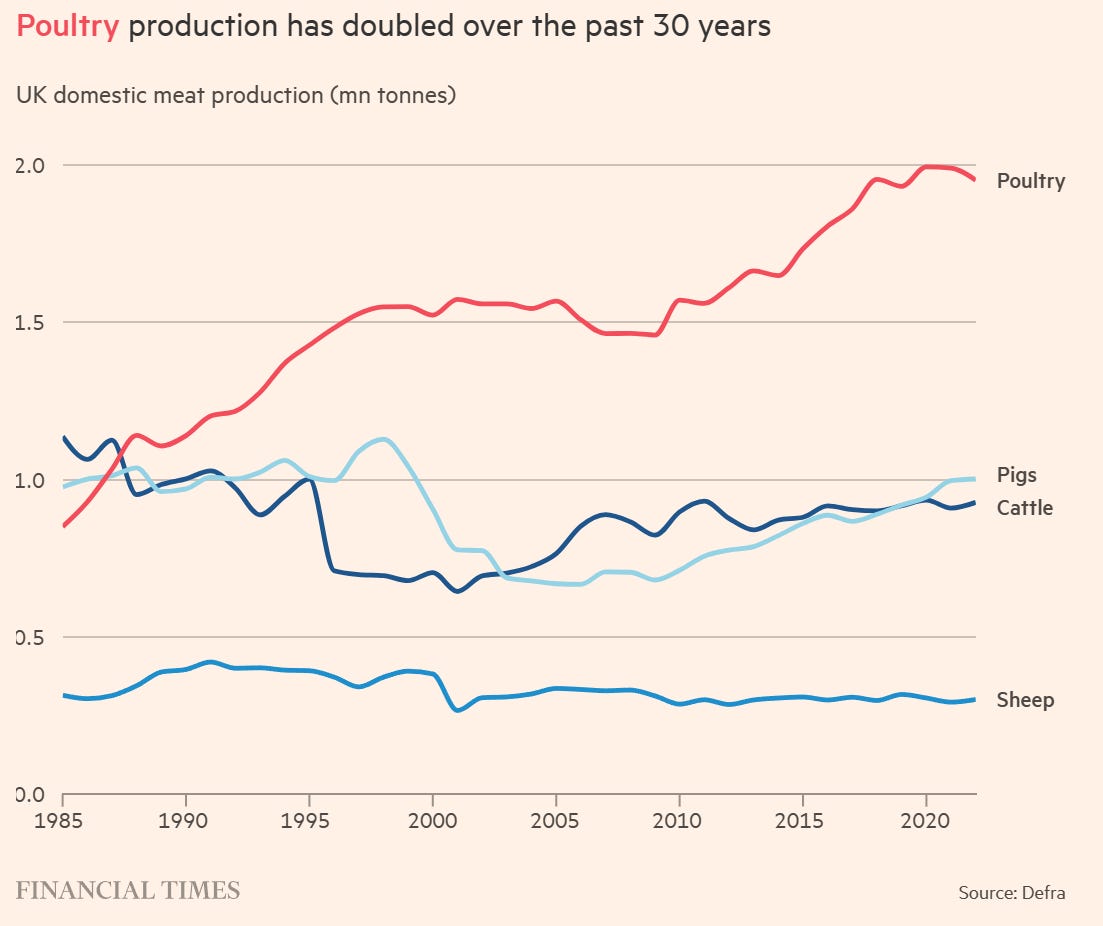

These graphs are illuminating:

Animal abuse brought farmers’ costs down. But it also brought margins down: the FT spotlight one farm with a turnover of £9mn for 5.5mn chickens (that’s revenue of £1.64/chicken). Now, chicken farmers feel like they are living on razor-thin margins, and are pressured to keep prices low for retailers. Government subsidies also artificially lower the price of meat, and other government spending absorbs the cost of meat’s externalities, like the damage to our environment and our public health.
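The revenue-per-bird figure is simple division of the farm's turnover by the number of chickens. A minimal sketch of that arithmetic (the names are illustrative); note that this is revenue, not profit, so the farm's margin per bird is some unknown fraction of it.

```python
# Revenue per chicken for the farm profiled by the FT.
TURNOVER_GBP = 9_000_000   # turnover, per the FT
CHICKENS = 5_500_000       # chickens, per the FT

# Revenue (not profit) per bird; the FT piece does not give per-bird costs.
revenue_per_chicken = TURNOVER_GBP / CHICKENS
print(f"£{revenue_per_chicken:.2f} per chicken")
```

The exact quotient is about £1.636, which rounds to the £1.64 quoted above.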

Chicken’s low price and high rate of current consumption is a story decades in the making. We shouldn’t mistake today’s conditions for the natural state of the world – let alone an optimal state. This is an aberration: never before have so many chickens been farmed, and never before have they been farmed so cruelly. The economics are unsustainable. The equilibrium could break down all of a sudden, but it’s hard to say when.

(This isn’t just an aberration in history, but in geography too! The UK is out of sync with other countries, with one of the lowest shares of income spent on food. In the G20, we have the second-lowest. This is a sensitive issue to raise in the UK during a cost of living crisis – but policymakers should be aware that our food, especially our chicken, is artificially cheap.)

The FT highlight some reasons why recent years have been particularly financially stressful for chicken farmers. These are the usual suspects, “from Covid-19 and its economic after-effects to Brexit and the war in Ukraine”. “Production of broiler chickens [...] dropped 11.4 per cent in July compared with 12 months ago”. Perhaps this is the moment of crisis which, like a lightning strike, will suddenly reorder the chicken agri-industry.

The piece was filed from Lyonshall, Herefordshire, and weighed in on the environmental damage chicken farming has wrought there:

The sheer volume of chicken produced in the UK has become an environmental issue, notably in the lush Wye Valley, on the England-Wales border, where phosphates from chicken manure have contributed to the growth of harmful algal blooms in the river Wye. The algae kills off the natural river weed that serves as nurseries for fish and is contributing to the decline of endangered species.

“That area has become a cesspit of poultry production,” says Tim Lang, emeritus professor of food policy at City University, who advocates for processing to be decentralised and dispersed across regions to prevent pollution. “The ecological damage has been an illustration of anarchic — not orderly — capitalism.”

The FT aren’t the only ones worried about the River Wye.

The Telegraph reports on ‘The true cost of cheap chicken’, including environmental externalities on the Wye. They too frame the UK’s relationship with chicken today as historically aberrational. What a weird moment in time, when “pork and beef consumption [in wealthy countries] has remained unchanged since 1990 but chicken’s has increased by 70 per cent and continues to grow”, when over “40 per cent of the meat we eat in Britain is chicken”, when “the UK chicken restaurant market will go from £2.3 billion (2022) to reach £2.7 billion in 2027”.

What are the consequences? “[E]xperts suggest that giving chicken a free pass has increased the incidence of avian flu, caused ecological devastation, and may be driving antibiotic resistance.”

Weakening birds’ immune systems and then packing them in sheds together exposes our chickens and ourselves to possible pandemics. More antibiotics go to farm animals than people; we sacrifice the potency of our medicines at the altar of cheap meat. And the run-off from industrial poultry farming has polluted rivers and done damage to biodiversity. Natural England recently downgraded the River Wye from “unfavourable and improving” to “unfavourable and declining” due to the continuing pollution problems. The National Farmers Union said blaming farming was unfair, but 24 million chickens (a quarter of British broilers!) are being raised in the river’s catchment area and an academic study has pinned 70% of the phosphate in the River Wye on agriculture (source). Last month, chicken processor Avara said it would stop selling chicken manure to be used as fertiliser in the region due to pollution concerns.

The River Wye problem has received attention in local news. The Hereford Times published readers’ letters under the titles ‘Chicken farming is ruining our countryside and river’ and ‘Here's why Herefordshire chicken numbers must be slashed’. This follows a period of national attention on river pollution; water firms and the government have been roundly criticised for failing to cope with the issue. MPs and MP wannabes have been posing in their wellies.

Something interesting in the Telegraph: they emphasise that, when comparing the growth in chicken consumption with the flat consumption of pork and beef, we’re concerned with elasticity and substitutability between meats. I think a lot about society exchanging meat for meat-free meals (whether that’s alternative proteins or straightforwardly vegan meals), but exchanging chicken for other meats might be more tractable and might matter a lot too, if fewer animals are killed and each animal suffers less.

Everybody wants something to happen with food policy. Richard Griffiths, Chief Executive of the British Poultry Council, has written an op-ed for PoliticsHome on the chicken supply chain crisis:

Dithering and indecision over matters of basic food safety and food security by pushing back the implementation of food import controls for a fifth time reflects a concerning pattern of governance that must urgently be addressed – particularly should Britain still want a poultry industry this time next year

Will the government respond with food policy reforms? The last decade of food policy has been half-hearted. The Food 2030 strategy was introduced by Gordon Brown’s government in 2010 but dropped by the coalition that same year. The FT say 2019’s National Food Strategy “was largely watered down, delayed or ignored”. Policy changes come with a crisis, says the FT, and “[f]or poultry farmers, the crisis is here, but there is no sign of a solution”.

For chickens, too.

So: how should food policy reforms take animal welfare into account? I don’t have the solutions, but here are some broad principles for policymakers to consider:

Most (land farm) animal welfare is chicken welfare. These three pieces contain some astronomical figures about chicken: in recent decades, it has become the UK’s most popular meat. Due to factory farming and selective breeding, I’m confident the average farmed chicken has a worse life than farmed mammals (not that they have flourishing lives, by any means). And due to the small animal problem (each bird yields far less meat than a pig or a cow, so many more birds are farmed for the same amount of meat), millions of them are living through suffering.

Stronger animal welfare laws would be popular. Linsey Smith, the BBC’s rural affairs correspondent, says leaked footage from farms used to “only highlight illegality or the abuse of animals”, but is now “showing common practices, which are legal”. Throughout her piece, there’s a sense that the laws on animal welfare are lagging behind popular opinion.

We will have to face up to the real price of chicken. The BBC, the FT and the Telegraph all portray the artificially low price of chicken as about to buckle under the weight of economic reality. There could even be a rare opportunity for agreement between farmers and animal welfare advocates here.

And that might mean reducing meat consumption. The Telegraph quote Rob Percival (head of food policy for the Soil Association, author of The Meat Paradox), who says that making chicken farming sustainable and higher-welfare would push the average person’s chicken consumption down by 70% or more.

And with that, of course, we’re back where we started: a policy that reduces meat consumption (even indirectly) will be unpopular with farmers, which probably means unpopular with Labour, and certainly means unpopular with Sunak. Here’s the essential dilemma of policymaking for animal advocates.

Let me link here to the Social Market Foundation’s Aveek Bhattacharya, who writes “Our research shows voters want to cut meat consumption. Labour policy must reflect this” for LabourList. (I spotlighted the research last week.) The SMF have previously advised policymakers that “it is clear that welfare issues among farmed animals is overwhelmingly about chicken”.

Animal policy 4. Scottish salmon farming under scrutiny too

It’s not just chickens living and dying in terrible conditions in Britain. The Guardian has detailed the conditions of farmed Scottish salmon, spotlighting an investigation from vegan charity Viva!

Salmon farmed in Loch Torridon, Loch Carron and Loch Kishorn in the north-west Highlands are infested with sea lice (Lepeophtheirus salmonis), which feed on the fish’s skin and can kill them. The lice counts are underreported and underappreciated, but hardly secret. At one loch, the official count says “weekly average female adult sea lice per fish” is up to 2.14. At another, up to 5.4. But treatments are supposed to start at just 0.5 female adult sea lice per fish.

Scotland is one of the world’s largest exporters of salmon, and this fish is an increasingly large proportion of the animals farmed in the UK.

Animal policy 5. Social media, the American Bully XL, and our attitude to animals

New law: Social media platforms must prevent users seeing animal torture footage. (Guardian) This is part of developments on the Online Safety Bill, and comes after a BBC investigation uncovered a network of monkey torturers. I think this is a good law, but it’s revealing how governments condemn animal abuse that’s associated with charismatic, so-like-us, unfarmed animals – but sponsor and subsidise the animal abuse that makes meat of fellow creatures.

The UK bans the American Bully XL. (Guardian) This is the first dog breed to be banned since 1991; it was the most common breed to be involved in human deaths. Note that this is an extremely small-scale problem (single digits of deaths; each one individually tragic, but not an important pattern) and therefore out-of-scope for EA. But again, the story’s interesting because it reveals something about how the UK relates to animals. We have a sense that some selective breeding has had undesirable consequences, and we are comfortable banning it. Maybe this matters for UK attitudes to Frankenchickens. Maybe not.

But for the grace(?) of Brexit: EU takes up x-risk, drops animal welfare

We’ve all seen EA increasing its focus on existential risk from AI, perhaps at the expense of cause areas like animal welfare. Now it looks like the EU might be following this trend. Ursula von der Leyen announced at her State of the Union 2023 address that the EU was concerned about AI x-risk, directly quoting the Center for AI Safety’s Statement on AI Risk, “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

However, the EU also looks set to drop important animal welfare wins, including a move away from cages for farm animals. This is a significant blow for the animal advocacy movement. Here’s the Financial Times (paywalled):

The EU is considering scrapping plans to impose regulations designed to improve animal welfare in the farming industry over concerns about the impact it could have on food inflation, according to senior officials.

The European Commission had promised to act after public pressure to stop practices such as the use of cages for livestock, the killing of day-old chicks, and the sale and production of fur.

But concerns that the proposed changes could add to food costs, which rose sharply after Russia invaded Ukraine last year, have led Brussels to reconsider the plans.

I bet this is of interest too…

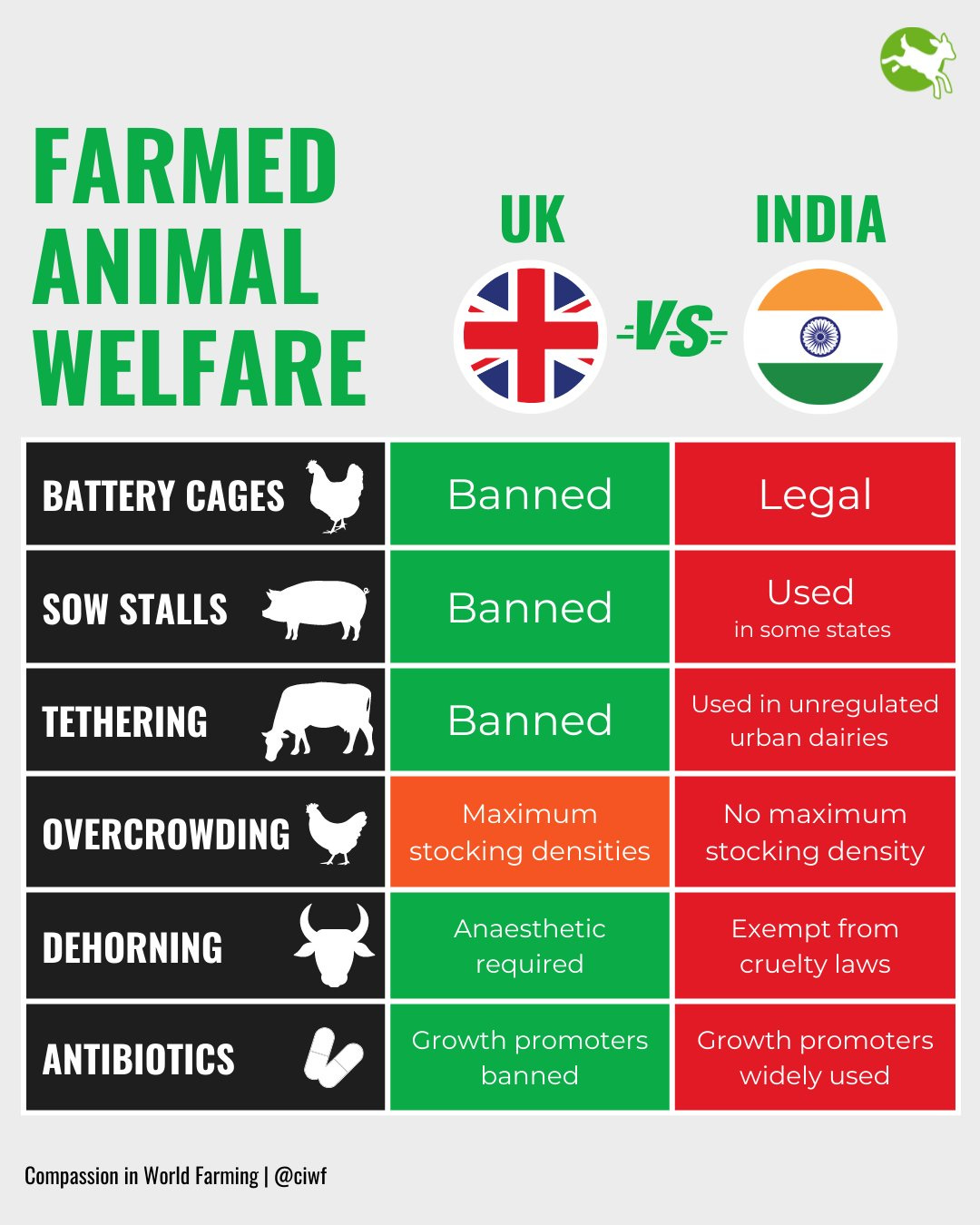

Give or take a Chinese spy, the biggest story from the G20 was a potential trade deal between the UK and India. It now looks likely that a deal will be agreed by Diwali (source; paywalled). Most media coverage focused on immigration: India’s condition for a deal is more visas. This matters, and it touches high-emotion, high-salience issues in British politics like migration policy, Brexit and racism. It’s also hugely relevant to global health and development: the consequences of immigration are complicated, but we know it improves the lives of those who migrate and improves the economies of their new homes. But I also want to point out the animal welfare consequences – look at this infographic from Compassion in World Farming:

This story, “How a chicken farm sparked a global animal rights movement backed by Spike Milligan”, is a review/teaser for a book about the history of Compassion in World Farming. Something interesting: there’s a focus on EU sentience legislation as a particular win for the organisation. I’m uncertain but optimistic about the expected value of sentience legislation; see Animal Ask’s “Does sentience legislation help animals?”

Also, three stories from The Telegraph:

“France is more supportive of us than Britain, says UK nuclear startup”

“Astronaut Tim Peake backs plans for solar farms in space”. The Dyson sphere begins now?

“Britain plots labels for deepfake pictures and videos in crackdown on wild west AI”.

Emergent capabilities: Different AIs have come to different conclusions about whether a painting (the de Brécy Tondo) was made by Raphael. These AIs use facial recognition technology. Also, using AI to select the embryo (should I say zygote, or another word?) for IVF treatment is significantly more effective than a human doctor. Crazy.

A newsworthy vegan: Dipping out of UK news, I’d like to celebrate DxE activists who have received coverage in The Atlantic – “Radical Vegans Are Trying To Change Your Diet” – as Wayne Hsiung faces trial for open rescue.

London vegan restaurant rec: Tofu Vegan is a great, small chain of Chinese restaurants!

I’m aware that I’m underreporting on global health and development news right now. That’s a reflection of my personal news intake – if anybody has GHD news which would be good to share in my next newsletter, please ping me at bjmnstevenson@protonmail.com